How to Get Started

Go from sign-up to discovering the behavioral model of your software in four steps.

1Sign up and get an API key

Your first step is to obtain an Imandra Universe API key, which gives you access to SpecLogician and other tools in the Imandra Universe ecosystem.

2Install locally

Includes Python 3.13

Install SpecLogician locally — the script sets up uv and Python automatically so your workspace can build models, discover artifacts, and run analysis.

3Install the extension

The extension works with your local installation to visualize states, transitions, graphs, scenarios, and related artifacts directly in your editor.

With SpecLogician installed, point your coding agent at the service you care about. It can discover the model, inspect scenarios, and reason about behavior instead of guessing from local code alone.

Why AI Coding Needs Mathematical Context

LLM coding agents are powerful code generators, but their reasoning is fundamentally local. As systems become more stateful, integrated, and policy-heavy, the number of meaningful behaviors and edge cases rises quickly — while the amount of context an LLM can reliably reason over does not.

As edges grow, so does the space of behaviours an agent must reason about. Without a formal model, this space is opaque.

The number of meaningful behaviours grows combinatorially with system complexity — making prompt-local reasoning increasingly unreliable.

This matters most in enterprise and regulated environments, where teams need precise reasoning about behavior, assumptions, compliance, and change impact.

The missing piece is not more generated code. It is a way to discover the behavioral model already embedded in the system and use it as a stable reasoning reference.

- Large, evolving codebases with many interacting components

- Long-lived business logic and policy-heavy behavior

- Auditability, compliance, and controlled change

- High operational, legal, or financial cost of subtle mistakes

Discover Hidden Paths, Gaps and Change Effects

SpecLogician helps developers and AI agents discover behavioral structure that is hard to see from local code alone — then use it for testing, migration, change review, policy-heavy engineering, and semantic observability.

Discover test gaps and uncovered behavior

Discover hidden paths before migration

Discover change impact before merge

Discover policy conflicts and exception paths

Discover drift across requirements, tests, and logs

Connect observability to system semantics

How Agents, SpecLogician, and ImandraX Work Together

When prompt-local reasoning is not enough, LLM coding agents use SpecLogician to discover behavioral structure, refine it into mathematical context, invoke reasoning, and act on the evidence.

How SpecLogician Discovers the Model

SpecLogician helps LLM coding agents discover states, transitions, predicates, and scenarios from the artifacts that already exist in a project.

Requirements

Source code

Scenarios

Tests and logs

Operational traces

Predicates, transitions, and state

How the Specification Is Constructed

In SpecLogician, the specification is a shared mathematical model discovered from the system and refined into a form that can be visualized, reasoned about, and kept aligned over time.

SpecLogician uses ImandraX and the Imandra Modeling Language to discover and represent system behavior explicitly, then reason over that specification to check consistency, find edge cases, generate high-value tests, and connect runtime evidence back to system semantics.

Domain model

type state = {

logged_in : bool;

login_count : int;

}

type action =

| Login

| LogoutPredicates and transition

let step (s : state) (a : action) : state =

match a with

| Login ->

{ logged_in = true;

login_count = s.login_count + 1 }

| Logout ->

{ logged_in = false;

login_count = s.login_count }

let is_logged_in (s : state) : bool =

s.logged_in

let has_logged_in_at_least_once (s : state) : bool =

s.login_count > 0A single scenario

Example scenario

Given: the user is logged out

When: a Login action occurs

Then: is_logged_in becomes true and has_logged_in_at_least_once holds

State describes what the system currently knows.

Actions describe events that can change the system.

Predicates describe properties we want to reason about.

Scenarios turn intended behavior into analyzable structure.

Real systems scale this up by linking many such domain models, predicates, scenarios, requirements, tests, logs, and traces into one evolving mathematical specification, then using ImandraX to reason over it.

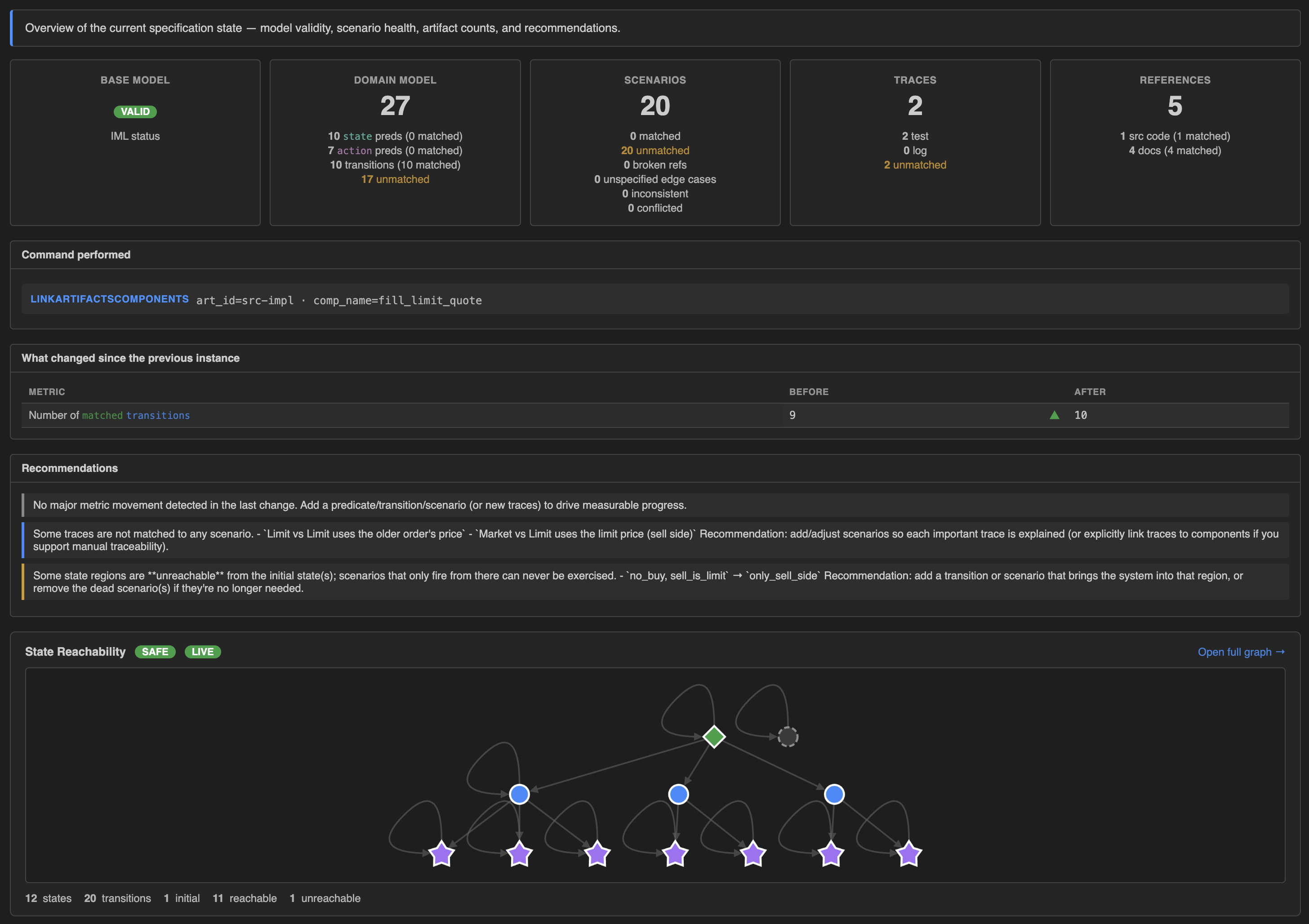

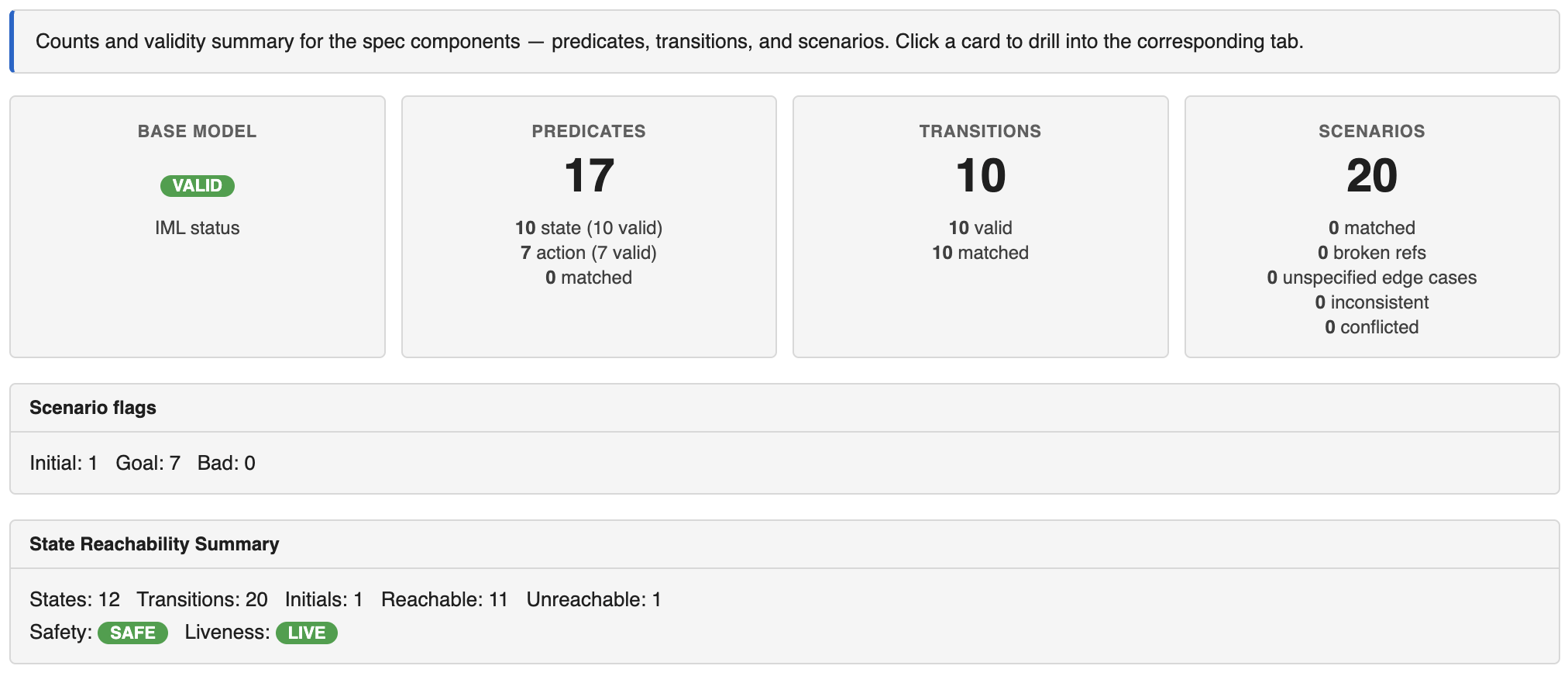

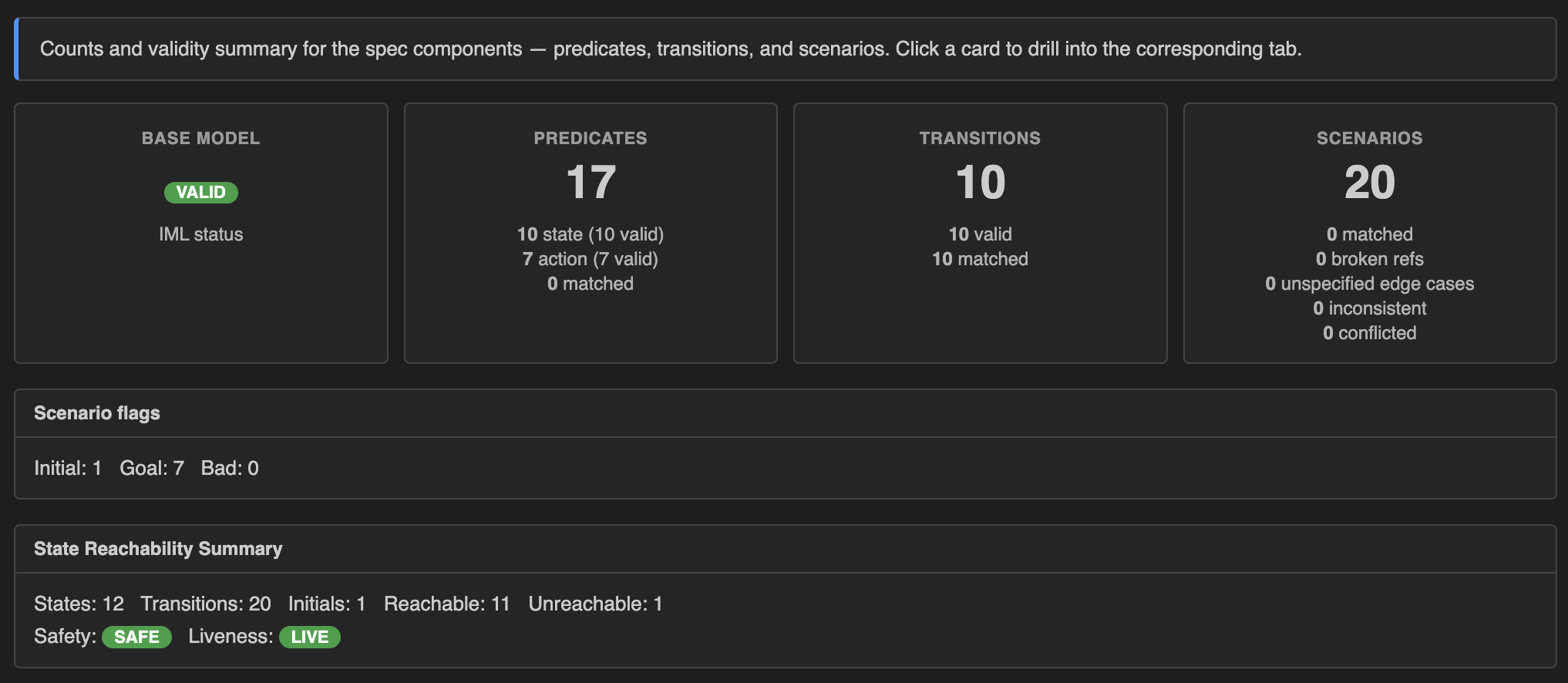

Live in the dashboard

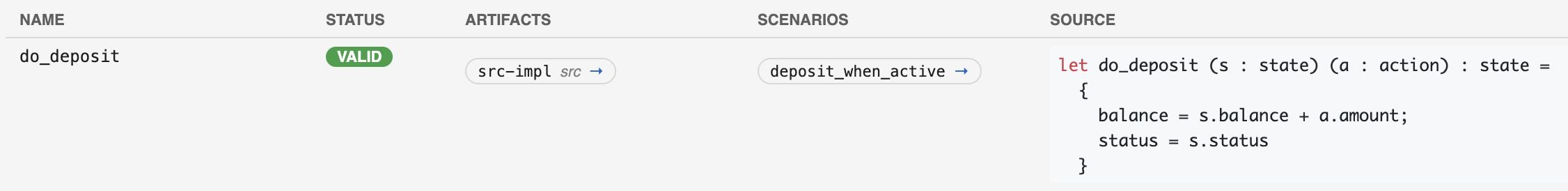

As the model grows, the dashboard renders it live — an aggregate summary, every transition with its artifacts and source, and every scenario with its predicates and links.

Summary tab — validity, counts, scenario flags, reachability.

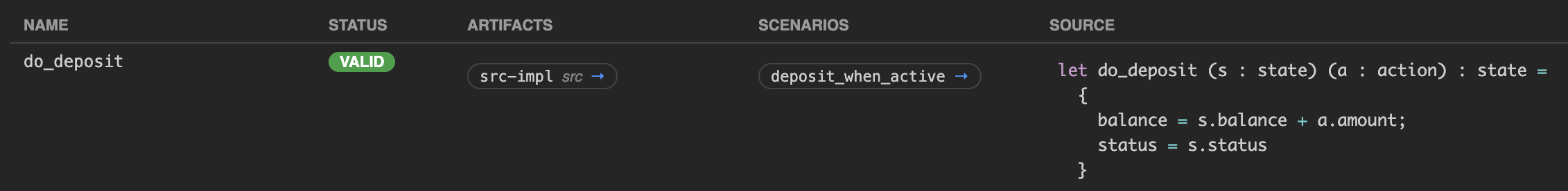

A row from the Transitions tab — name, validity, linked artifacts, scenarios, source IML.

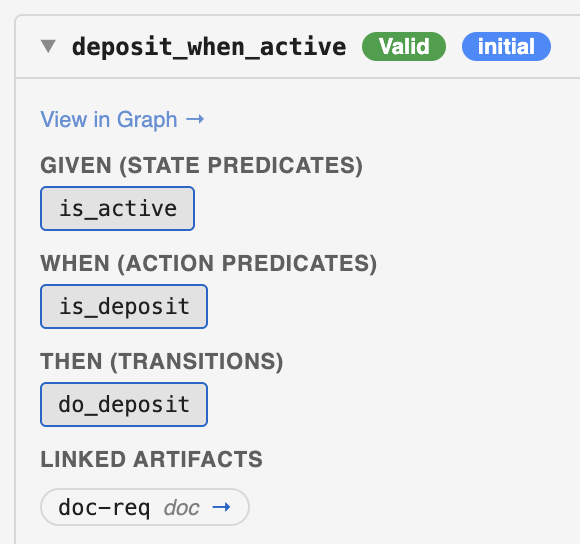

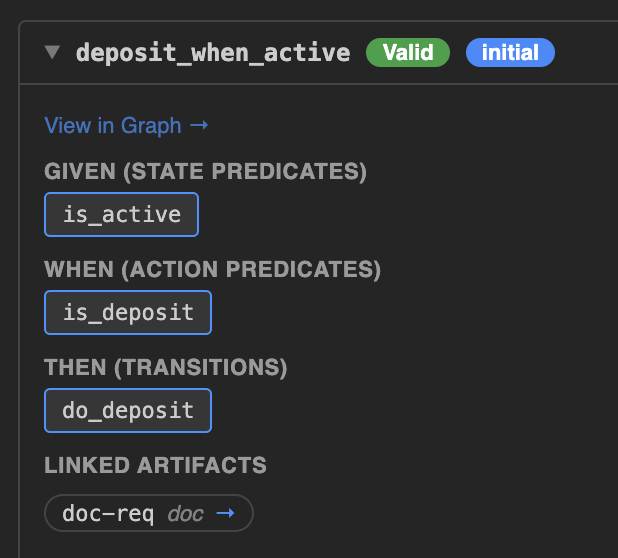

A scenario card — given / when / then chips and linked artifacts.

What Mathematical Reasoning Takes Place

Once SpecLogician has helped developers and LLM coding agents discover shared mathematical structure, they can invoke automated reasoning over the model.

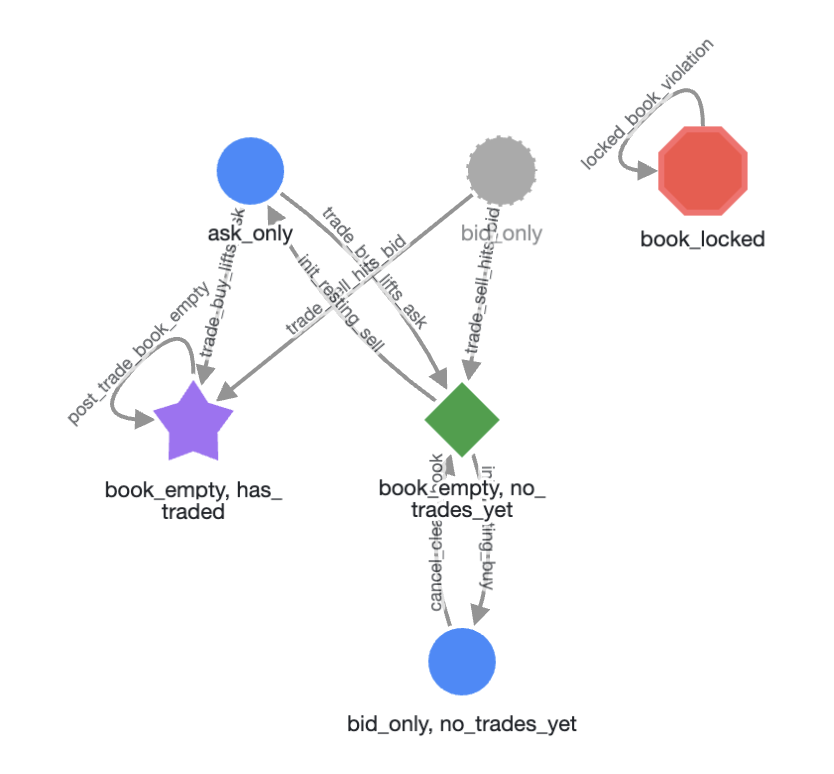

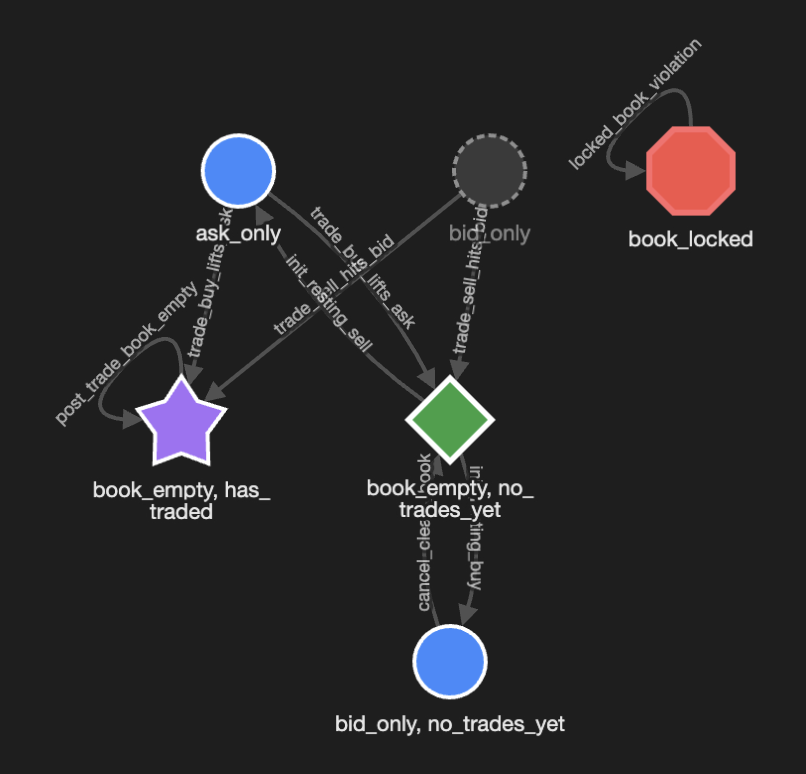

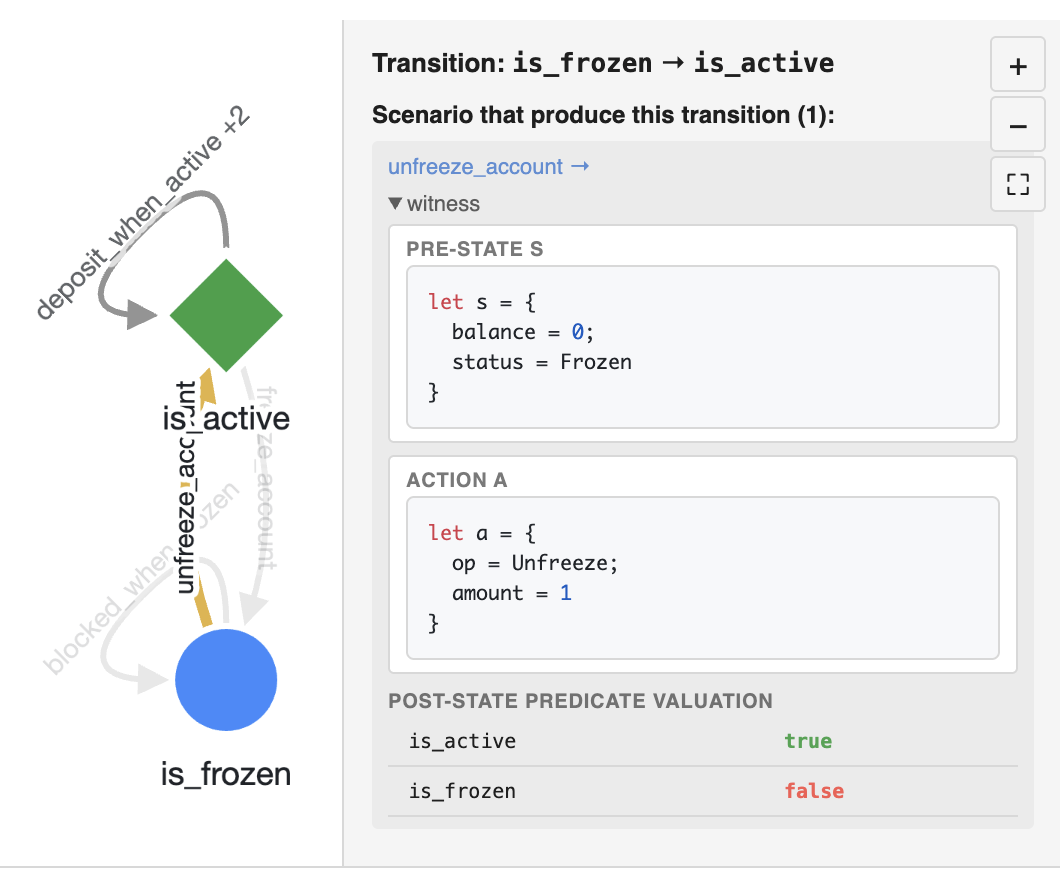

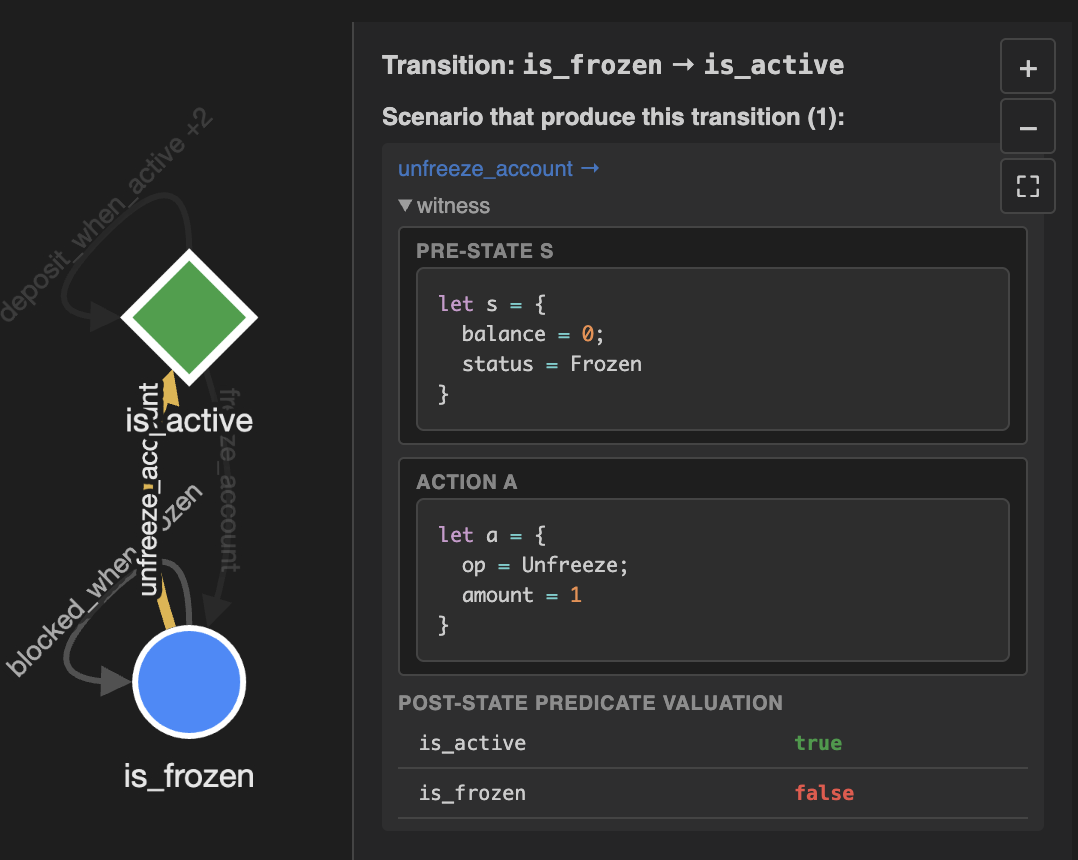

Reachability graph — nodes are state regions, edges are scenarios.

Click an edge for the concrete witness — pre-state, action, post-state.

Consistency checking

Reachability analysis

Contradiction detection

Edge-case discovery

Counterexample generation

Coverage and test generation

Built for Controlled Change, Compliance, and Safety-Critical Delivery

The techniques behind SpecLogician come from years of applying mathematical modeling and automated reasoning in large, heavily regulated organizations.

These are environments where teams need to deliver safety-critical software with confidence, support audit and governance, reason precisely about policy and edge cases, and extract actionable intelligence from complex software behavior.

Because the model can be refined using logs, traces, and operational evidence, SpecLogician helps teams connect formal reasoning with what the system is actually doing in production.

SpecLogician brings that proven discipline into an LLM-powered workflow. This is not a new process invented for AI coding — it is the operationalization of years of logic-first engineering practice into a system that AI agents can participate in.

Discover, observe, and reason about your software

Use SpecLogician to help developers and LLM coding agents discover the mathematical model implicit in requirements, source code, scenarios, tests, logs, and traces — then use it for reasoning, verification, semantic observability, and test generation.

Sign up for an Imandra Universe account to get your API key and start reasoning about your software today.

Start now